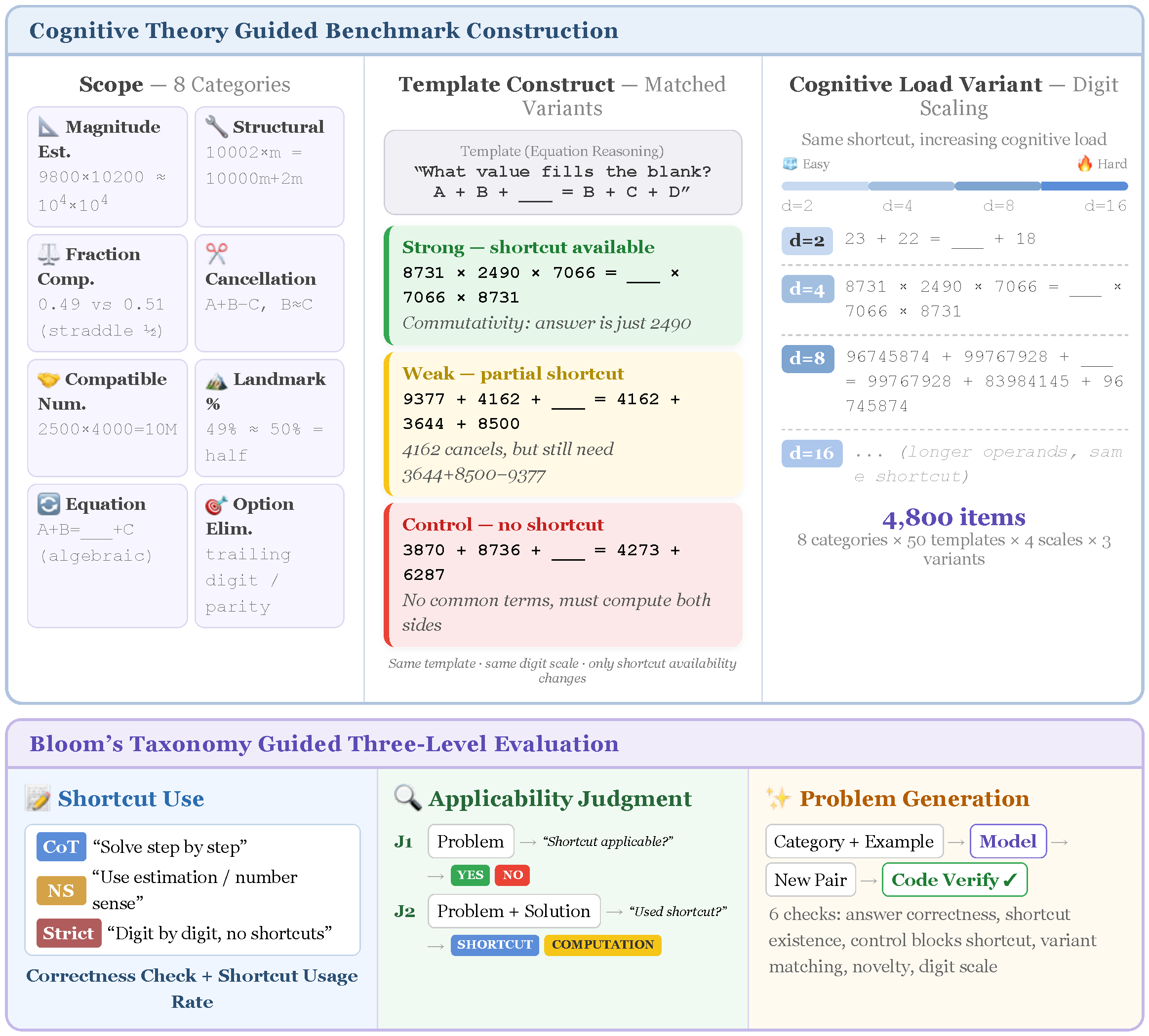

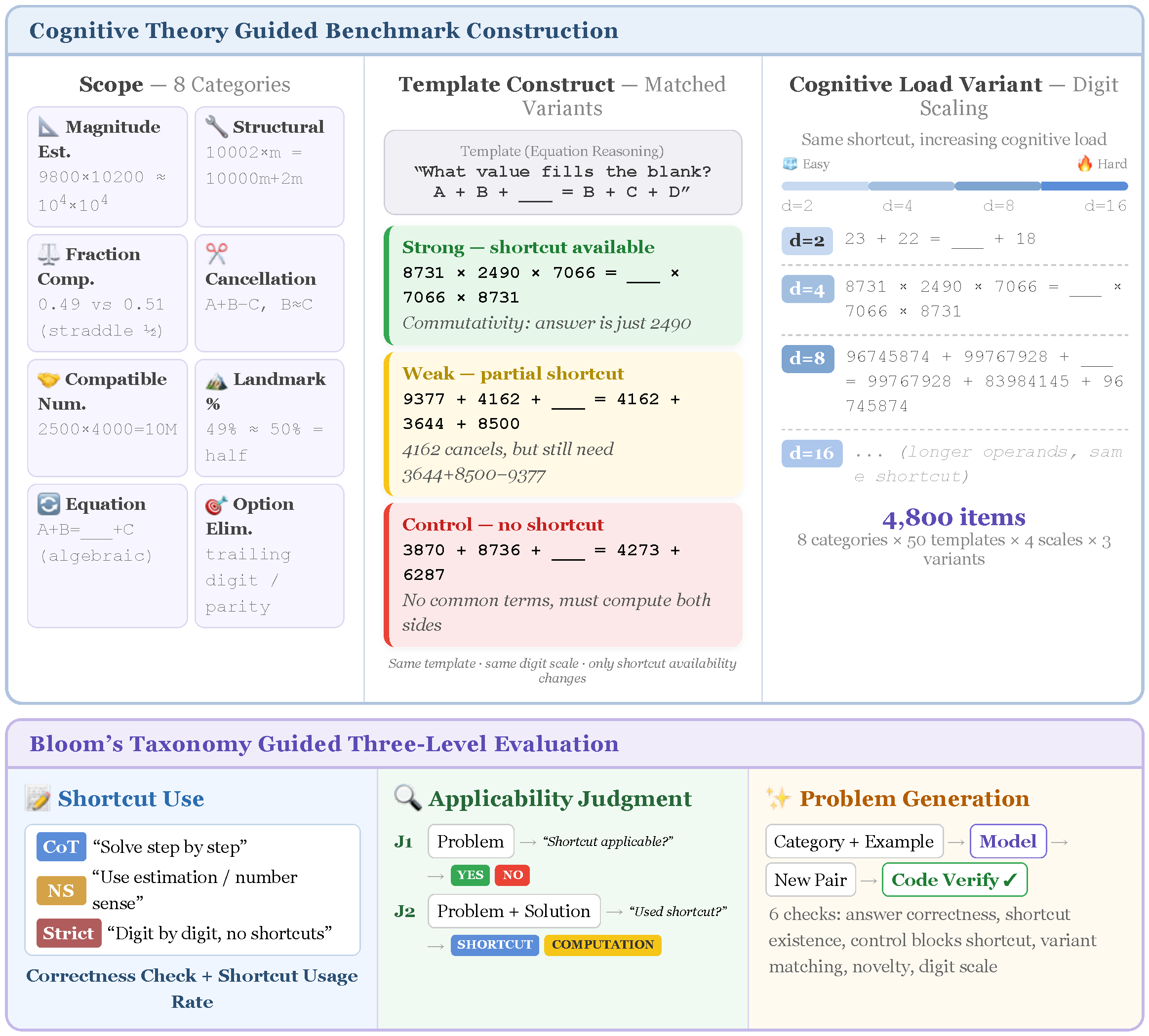

SenseMath is a controlled benchmark that measures whether LLMs can exploit numerical shortcuts rather than defaulting to step-by-step computation. Its 1,600 matched item families span 8 categories and 4 digit scales.

Number-sense (NS) prompting uniformly increases the use of shortcut strategies, but these strategies succeed only where a valid shortcut exists, producing an accuracy asymmetry.

In the J1 task, models must judge whether a math problem can be solved via a number-sense shortcut. Five LLMs were evaluated on this task.

In the G2 task, models generate a matched pair of expressions: one with a valid shortcut (strong) and one without (control). Each generated pair is checked against 6 criteria. The examples shown are magnitude-estimation pairs from each model.
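The 6 G2 criteria are not listed here, so the following is only a hypothetical sketch of what a single check on a magnitude-estimation pair might look like. It assumes a made-up item format ("is a × b greater than T?"), a round-to-one-significant-figure shortcut, and an arbitrary 25% margin; `ComparisonItem`, `shortcut_decides`, and `is_valid_pair` are illustrative names, not part of SenseMath. The strong and control items share operands and differ only in the threshold, so the estimate settles the strong item but not the control.

```python
from dataclasses import dataclass


@dataclass
class ComparisonItem:
    """Hypothetical magnitude-estimation item: is a * b greater than threshold?"""
    a: int
    b: int
    threshold: int  # assumed positive


def round_to_leading_digit(n: int) -> int:
    """Round a positive integer to one significant figure (e.g. 487 -> 500)."""
    magnitude = 10 ** (len(str(n)) - 1)
    # Integer round-half-up to avoid float rounding quirks.
    return (n + magnitude // 2) // magnitude * magnitude


def shortcut_decides(item: ComparisonItem, tolerance: float = 0.25) -> bool:
    """True if the rounded estimate lands far from the threshold (assumed
    relative margin >= tolerance) and gives the same answer as exact computation."""
    estimate = round_to_leading_digit(item.a) * round_to_leading_digit(item.b)
    margin = abs(estimate - item.threshold) / item.threshold
    exact_answer = item.a * item.b > item.threshold
    estimated_answer = estimate > item.threshold
    return margin >= tolerance and estimated_answer == exact_answer


def is_valid_pair(strong: ComparisonItem, control: ComparisonItem,
                  tolerance: float = 0.25) -> bool:
    """One hypothetical criterion: the strong item is decidable by estimation,
    while the control item is not and needs exact computation."""
    return shortcut_decides(strong, tolerance) and not shortcut_decides(control, tolerance)


strong = ComparisonItem(487, 21, 2000)    # estimate 500 * 20 = 10000, far above 2000
control = ComparisonItem(487, 21, 10000)  # estimate equals the threshold; exact 10227 needed
print(shortcut_decides(strong))           # True
print(shortcut_decides(control))          # False
print(is_valid_pair(strong, control))     # True
```

A real checker would need all of the benchmark's criteria; this sketch only shows why a "matched" pair can hold the operands fixed and still separate the shortcut-friendly item from its control.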