SenseMath: When Number Sense Helps Numerical Reasoning in Large Language Models

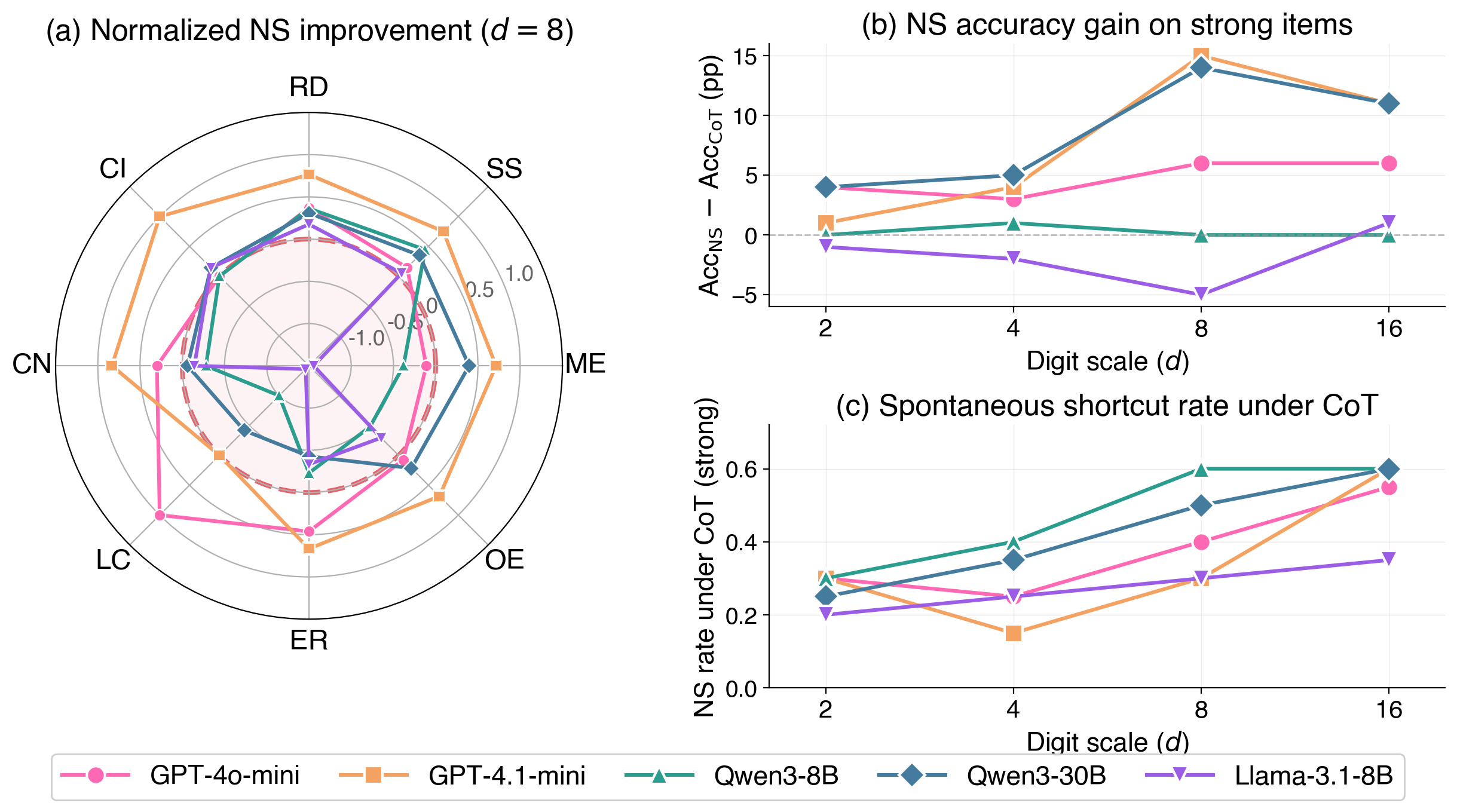

Large language models often default to step-by-step computation even when efficient numerical shortcuts are available. We introduce SenseMath, a controlled benchmark for evaluating structure-sensitive numerical reasoning in LLMs. SenseMath contains 4,800 items spanning eight shortcut categories and four digit scales, with matched strong-shortcut, weak-shortcut, and control variants. It supports three evaluation settings of increasing cognitive demand: Shortcut Use (whether models can apply shortcuts), Applicability Judgment (whether they can recognize when a shortcut is appropriate), and Problem Generation (whether they can generate new shortcut-amenable problems).
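To make the idea of a shortcut-amenable item concrete, the sketch below contrasts a product with an operand near a round number against one without. It is an illustration only: the round-number shortcut, the function names `multiply_via_round_number` and `shortcut_applies`, the `tolerance` threshold, and the example operands are all assumptions made here and are not taken from the SenseMath items or code.

```python
def multiply_via_round_number(a: int, b: int, base: int = 100) -> int:
    """Compute a * b by rewriting a as base + delta (hypothetical shortcut).

    Example: 98 * 47 = 100 * 47 - 2 * 47.
    """
    delta = a - base
    return base * b + delta * b


def shortcut_applies(a: int, base: int = 100, tolerance: int = 5) -> bool:
    """Toy applicability check: is `a` within `tolerance` of the round base?

    The threshold is an arbitrary choice for illustration, not a benchmark rule.
    """
    return abs(a - base) <= tolerance


# Hypothetical item pair: a shortcut-friendly variant and a control variant.
strong_variant = (98, 47)   # 98 is close to 100, so the rewrite is cheap
control_variant = (73, 47)  # no nearby round number, so the rewrite saves little

for a, b in (strong_variant, control_variant):
    label = "shortcut" if shortcut_applies(a) else "standard computation"
    print(f"{a} x {b} = {multiply_via_round_number(a, b)} ({label})")
```

The rewrite is algebraically valid for any operand, so both calls return the correct product; what differs is whether it saves work. In this toy framing, Shortcut Use loosely corresponds to applying `multiply_via_round_number`, and Applicability Judgment to deciding what `shortcut_applies` should return.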

Our evaluation across five LLMs shows that when explicitly prompted, models readily adopt shortcut strategies and achieve substantial accuracy gains on shortcut-amenable items (up to 15%), yet under standard chain-of-thought prompting they spontaneously employ such strategies in fewer than 40% of cases. Moreover, models systematically over-generalise shortcuts to problems where they do not apply, and fail to generate valid shortcut-bearing problems from scratch. These results suggest that current LLMs exhibit procedural shortcut fluency without the structural understanding that underlies human number sense.

[Project Page] [Code] [Dataset]