Reliable Control-Point Selection for Steering Reasoning in Large Language Models

Published in arXiv, 2026

Recommended citation: Haomin Zhuang, Hojun Yoo, Xiaonan Luo, Kehan Guo, Xiangliang Zhang. (2026). "Reliable Control-Point Selection for Steering Reasoning in Large Language Models." arXiv:2604.02113. https://arxiv.org/abs/2604.02113

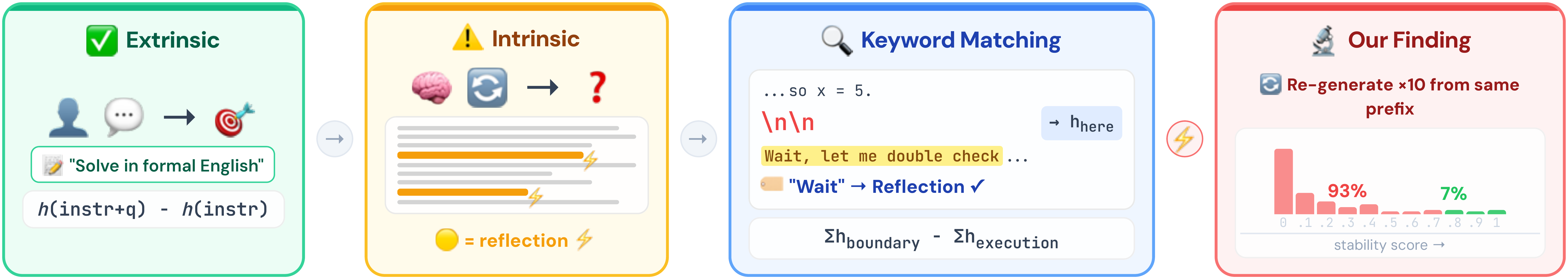

Steering vectors offer a training-free way to control reasoning behaviors in LLMs, but existing methods detect behavioral boundaries through keyword matching and treat every match as a genuine signal. We find that 93.3% of keyword-detected boundaries are behaviorally unstable: the model fails to reproduce the detected behavior when re-run from the same prefix. We develop a probabilistic model of intrinsic reasoning behaviors and propose stability filtering, which retains only high-probability boundaries. Combined with content-subspace projection, this achieves 0.784 accuracy on MATH-500 (+5.0 points over SEAL), and the vectors transfer across models without re-extraction.
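The idea of stability filtering can be illustrated with a minimal sketch: resample continuations from the same prefix several times, estimate how often the behavior reappears, and keep only the boundaries whose estimate clears a threshold. Everything below is an assumption made for illustration and is not taken from the paper: the function names `stability` and `filter_boundaries`, the keyword check used as the behavior detector, the sample count, the threshold, and the toy sampler that stands in for an actual LLM call.

```python
import itertools
from typing import Callable, Dict, List, Sequence


def stability(prefix: str,
              sample_continuation: Callable[[str], str],
              keywords: Sequence[str],
              n_samples: int = 4) -> float:
    """Fraction of resampled continuations from `prefix` that show the behavior.

    The keyword check is a stand-in behavior detector (an assumption).
    """
    hits = 0
    for _ in range(n_samples):
        text = sample_continuation(prefix).lower()
        if any(k in text for k in keywords):
            hits += 1
    return hits / n_samples


def filter_boundaries(prefixes: Sequence[str],
                      sample_continuation: Callable[[str], str],
                      keywords: Sequence[str],
                      threshold: float = 0.75,
                      n_samples: int = 4) -> List[str]:
    """Keep only prefixes whose estimated reproduction rate is >= threshold."""
    return [p for p in prefixes
            if stability(p, sample_continuation, keywords, n_samples) >= threshold]


def make_toy_sampler(table: Dict[str, List[str]]) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: cycles through canned continuations."""
    iters = {p: itertools.cycle(c) for p, c in table.items()}
    return lambda prefix: next(iters[prefix])


# Toy data: prefix A reproduces the "wait" behavior every time, prefix B rarely.
toy_table = {
    "prefix A": ["Wait, let me check.", "Wait, that is wrong.",
                 "Wait, recheck this.", "Wait, one more time."],
    "prefix B": ["So the answer is 4.", "Wait, hmm.",
                 "Therefore x = 2.", "Thus we are done."],
}
sampler = make_toy_sampler(toy_table)
kept = filter_boundaries(list(toy_table), sampler, keywords=("wait",))
print(kept)  # ['prefix A']
```

In this toy run, prefix B matches the keyword in one of four resamples (an estimate of 0.25) and is discarded, while prefix A reproduces the behavior in all four and is kept. With a real model, `sample_continuation` would be a stochastic generation call, and the threshold and sample count would need tuning.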

| Paper | Code |
